Topics for Essays on Programming Languages: Top 7 Options

- Java Platform Editions and Their Peculiarities

- Python: A Favorite of Developers

- JavaScript: The Backbone of the Web

- TypeScript: Narrowing Down Your Topic

- The Present and Future of PHP

- How to Use C++ for Game Development

- How to Have Fun When Learning Swift

Delving into the realm of programming languages offers a unique lens through which we can explore the evolution of technology and its impact on our world. From the foundational assembly languages to today's sophisticated, high-level languages, each one has shaped the digital landscape.

Whether you're a student seeking a deep dive into this subject or a tech enthusiast eager to articulate your insights, finding the right topic can set the stage for a compelling exploration.

This article aims to guide you through selecting an engaging topic, offering seven top options for essays on programming languages that promise to spark curiosity and provoke thoughtful analysis.

Java Platform Editions and Their Peculiarities

If you’re new to the Java programming language, it’s best to start with the basics so you don’t overcomplicate your assignment. The most obvious option is a descriptive essay highlighting the features of the Java platform editions:

- Java Standard Edition (Java SE). It lets you develop Java applications and provides the core functionality of the programming language;

- Java Enterprise Edition (Java EE, now Jakarta EE). It extends Java SE for developing and running enterprise applications;

- Java Micro Edition (Java ME). It is used for running applications on small and mobile devices.

You can explain the purpose and key components of each edition to inform your readers and give them value. Alternatively, go in depth with a compare-and-contrast essay to show your understanding of the subject and apply critical thinking skills.


Python: A Favorite of Developers

You probably already know that Python is widely used around the world.

Its simple, English-like syntax makes Python a great choice for beginners who want to master programming. Besides, look at the opportunities it opens up:

- developing web applications, of course;

- building command-line interfaces (CLIs) to automate routine tasks;

- creating graphical user interfaces (GUIs);

- using helpful tools and frameworks to streamline game development;

- facilitating data science and machine learning;

- analyzing and visualizing big data.

All of these points can become solid ideas for your essay. For instance, you can use the list above as the basis for an argument on why one should learn Python. After doing your research, you’ll find plenty of evidence to convince your audience.

And if you’d like to spice things up, another option is to add your own perspective to the debate on which language is better: Python or JavaScript.


JavaScript: The Backbone of the Web

JavaScript is no less popular than Python, and some consider it even easier for a newcomer to learn. If you master it, you’ll gain a valuable skill that can help you start a lucrative career. Just think about it:

- JavaScript is used by almost all websites;

- with frameworks such as React Native, you can build mobile apps for iOS and Android;

- it lets you practice functional, object-oriented, and imperative programming;

- you can create jaw-dropping visual effects for web pages and games;

- it’s also possible to work with AI, analyze data, and find bugs.

So, the universality of JavaScript and the career opportunities it brings can become a non-trivial topic for your essay.

Hint: look up job descriptions that require JavaScript skills, then compare the salaries they offer to provide helpful, up-to-date information. Your professor should be impressed with this approach.


TypeScript: Narrowing Down Your Topic

Yes, you guessed right: TypeScript builds on the power of JavaScript. It is a superset of JavaScript that adds static typing and supports object-oriented programming, which helps developers handle large-scale projects. Its open-source compiler translates TypeScript into plain JavaScript.

If you want your essay to stand out and show a deeper understanding of programming basics, the best way is to choose a narrow topic. In other words, focus your writing on the features of TypeScript.

For example, begin with the types:

- Tuple, etc.

Having elaborated on how they work, proceed to explore the peculiarities, pros, and cons of TypeScript. Explaining when and why one should opt for it as opposed to JavaScript also won't hurt.

Here, you can go into as much detail as you want, but remember to give examples and use logical reasoning to support your claims.

The Present and Future of PHP

This language for server-side web development has been around for a long time, and it is still used by almost 80% of websites whose server-side language is known.

But there’s a stereotype that PHP can’t compete with modern programming languages, so the debate over whether PHP is still relevant continues. Why not use this fact to compose a top-notch analytical essay?

Here’s how you can do it:

1. research and gather information, especially statistics from credible sources;

2. analyze how popular the programming language is and note the demand for PHP developers;

3. provide an unbiased overview of its perks and drawbacks and support it with examples;

4. identify the trends of using PHP in web development;

5. make predictions about the popularity of PHP over the next few years.

If you put enough effort into crafting your essay, it’ll not only deserve an “A” but will also become a guide for your peers interested in programming.


How to Use C++ for Game Development

C++ is a general-purpose programming language well suited to developing large-scale applications. It is especially popular among video game developers because it offers close control over hardware and memory, which matters for performance.

Given that the video game industry is growing fast, you can write a paper on C++ programming in this field. The simplest approach is to offer advice to beginners.

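For example, review popular game development tools and how each one relates to C++ (not all of them are programmed in it):

- Unreal Engine (C++ plus Blueprints visual scripting);

- Lumberyard (a C++-based engine from Amazon);

- Unity (engine core in C++, scripting in C#);

- GameMaker Studio (uses its own GML language);

- GDevelop and GameSalad (largely no-code tools), among others.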

There are plenty of resources to use while working on your essay, and you can create your own top list for new game developers. Be sure to examine the tools’ features and user reviews to provide accurate information for your readers.


How to Have Fun When Learning Swift

Apple created Swift for developing iOS applications, and some people argue that it is the easiest programming language to learn. So, how about checking whether this statement is true or false?

The creators of Swift aimed to make it as convenient and efficient as possible. Let’s see why programmers love it:

- first of all, it works seamlessly across Apple devices and platforms;

- automatic memory management (Automatic Reference Counting) spares developers manual memory bookkeeping, so they can focus on new functionality;

- built-in error handling (throw, try, and catch) makes recovering from errors straightforward;

- Swift code is concise, and the language is fairly quick to learn;

- you can get advice from the dedicated Swift community if necessary.

Knowing all these benefits, you can build your arguments in favor of learning Swift. But we also recommend reflecting on the opposite point of view to present the whole picture in your essay. And if you want to dig deeper, compare Swift with other programming languages.

Learn How to Write a Compelling Essay with the Python Programming Language

In today’s digital age, programming languages have extended their reach beyond traditional software development and into various domains. Python, a versatile and powerful programming language, has found its way into the realm of writing essays. This article aims to explore the intersection of Python and essay writing, addressing questions such as whether Python can write an essay, the characteristics of a Python programming language essay, the debate between Java and Python, tips for writing good code in Python, the best AI tools for essay writing, and how to achieve success in Python programming.

Can Python Write an Essay?

Python, being a programming language, is primarily designed to process and manipulate data, automate tasks, and build applications. While Python can assist in automating certain aspects of the essay-writing process, it is important to note that it cannot independently generate an entire essay from scratch. The creativity and critical thinking required for crafting an essay are inherent to human intelligence and are yet to be replicated by machines.
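As a small illustration of that kind of assistance, the sketch below uses only the Python standard library to report basic statistics for a draft: word count, sentence count, average sentence length, and most frequent words. The function name `draft_stats` is hypothetical, and splitting sentences on `.`, `!` and `?` is a rough heuristic rather than real language analysis.

```python
import re
from collections import Counter


def draft_stats(text: str) -> dict:
    """Return simple statistics for an essay draft (a rough heuristic, not NLP)."""
    # Treat ., ! or ? followed by whitespace as a sentence boundary.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    # Treat runs of letters, digits and apostrophes as words (case-insensitive).
    words = re.findall(r"[a-z0-9']+", text.lower())
    average = round(len(words) / len(sentences), 1) if sentences else 0.0
    return {
        "words": len(words),
        "sentences": len(sentences),
        "avg_sentence_length": average,
        "top_words": Counter(words).most_common(3),
    }


if __name__ == "__main__":
    sample = "Python is readable. Python is popular! Is it easy?"
    print(draft_stats(sample))
```

The numbers can point you to overly long sentences or overused words, but the ideas and arguments still have to come from you.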

What is a Python Programming Language Essay?

A Python programming language essay refers to an essay that delves into the intricacies and applications of Python programming. It typically covers topics related to Python syntax, libraries, frameworks, and various use cases. Python essays serve as valuable resources for learners, enabling them to understand the language’s concepts and explore its potential.

Is Java Better than Python?

The debate between Java and Python has long been a topic of discussion among developers. While both languages have their strengths and weaknesses, it is essential to consider the context and purpose of their usage. Java is known for its performance, robustness, and wide range of applications, particularly in enterprise-level software development. On the other hand, Python boasts a simpler syntax, ease of use, and a vast ecosystem of libraries and frameworks, making it an ideal choice for tasks like data analysis, web development, and artificial intelligence.

Writing Good Code in Python

To write good code in Python, it is crucial to follow best practices and adhere to certain principles. Here are a few tips to help you:

1. Maintain code readability: Python emphasizes readability with its clean and concise syntax. Use meaningful variable names, comment your code, and structure it in a logical manner.

2. Follow the PEP 8 style guide: PEP 8 provides guidelines for writing Python code. Adhering to these standards ensures consistency and improves code readability across projects.

3. Utilize modular and reusable code: Break your code into functions or classes that perform specific tasks. This promotes code reusability, readability, and easier maintenance.

4. Handle exceptions gracefully: Python provides robust error handling mechanisms. Utilize try-except blocks to catch and handle exceptions, making your code more resilient.

5. Test and debug your code: Thoroughly test your code to identify and fix any issues. Utilize debugging tools and techniques to streamline the debugging process.
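Here is a small sketch that applies several of these tips at once: a descriptive, single-purpose function with PEP 8 naming, a try-except block around the risky conversion, and a test function that checks the behavior. The `parse_word_limit` helper and its default of 500 are hypothetical examples, not part of any library.

```python
def parse_word_limit(raw_value: str, default: int = 500) -> int:
    """Convert a user-supplied word limit to a positive int.

    Non-numeric input falls back to `default`; zero or negative numbers are rejected.
    """
    try:
        limit = int(raw_value)
    except ValueError:
        # Handle the expected failure explicitly instead of letting the program crash.
        return default
    if limit <= 0:
        raise ValueError(f"word limit must be positive, got {limit}")
    return limit


def test_parse_word_limit() -> None:
    """Tiny checks in the style of a pytest test function."""
    assert parse_word_limit("750") == 750
    assert parse_word_limit(" 42 ") == 42  # int() ignores surrounding whitespace
    assert parse_word_limit("abc") == 500
    try:
        parse_word_limit("-5")
    except ValueError:
        pass
    else:
        raise AssertionError("expected ValueError for a negative limit")


if __name__ == "__main__":
    test_parse_word_limit()
    print("all checks passed")
```

Catching only `ValueError` (rather than a bare `except`) keeps unrelated bugs visible instead of silently hiding them.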

The Best AI for Writing Essays

Artificial intelligence (AI) has made significant strides in natural language processing, including essay writing. Some notable AI tools for generating essays include OpenAI’s GPT-3, ChatGPT, and other language models. These models can assist in generating coherent text, providing ideas, and improving language fluency. However, it is important to remember that AI-generated content should always be used as a supplement and not a replacement for human creativity and critical thinking.

How to Be Successful in Python Programming

Becoming successful in Python programming requires dedication, practice, and continuous learning. Here are some tips to help you on your journey:

1. Start with the fundamentals: Develop a strong foundation by learning the basic syntax, data types, and control structures of Python.

2. Work on projects: Apply your knowledge to real-world projects. Building practical applications helps reinforce concepts and improves problem-solving skills.

3. Engage with the community: Join online forums, participate in coding communities, and collaborate with other Python enthusiasts. Sharing ideas and experiences can accelerate your learning process.

4. Read code: Analyze and study well-written Python code. Understanding how experienced developers structure their code and solve problems can provide valuable insights.

5. Embrace documentation and resources: Python has extensive documentation and numerous online resources. Make use of them to deepen your understanding of the language and its libraries.

Python, although unable to independently write essays, can significantly aid in the essay-writing process through automation and data processing. Understanding the characteristics of a Python programming language essay can help learners utilize these resources effectively. Additionally, while the Java versus Python debate continues, both languages have their strengths depending on the task at hand. By following best practices, utilizing AI tools wisely, and embracing a growth mindset, you can embark on a successful journey in Python programming. So, dive in, explore, and leverage the power of Python to enhance your essay writing and programming skills.


I wrote a programming language. Here’s how you can, too.

By William W Wold

Over the past 6 months, I’ve been working on a programming language called Pinecone. I wouldn’t call it mature yet, but it already has enough features working to be usable, such as:

- user defined structures

If you’re interested in it, check out Pinecone’s landing page or its GitHub repo.

I’m not an expert. When I started this project, I had no clue what I was doing, and I still don’t. I’ve taken zero classes on language creation, read only a bit about it online, and did not follow much of the advice I have been given.

And yet, I still made a completely new language. And it works. So I must be doing something right.

In this post, I’ll dive under the hood and show you the pipeline Pinecone (and other programming languages) use to turn source code into magic.

I’ll also touch on some of the tradeoffs I’ve had to make, and why I made the decisions I did.

This is by no means a complete tutorial on writing a programming language, but it’s a good starting point if you’re curious about language development.

Getting Started

“I have absolutely no idea where I would even start” is something I hear a lot when I tell other developers I’m writing a language. In case that’s your reaction, I’ll now go through some initial decisions that are made and steps that are taken when starting any new language.

Compiled vs Interpreted

There are two major types of languages: compiled and interpreted:

- A compiler figures out everything a program will do, turns it into “machine code” (a format the computer can run really fast), then saves that to be executed later.

- An interpreter steps through the source code line by line, figuring out what it’s doing as it goes.

Technically any language could be compiled or interpreted, but one or the other usually makes more sense for a specific language. Generally, interpreting tends to be more flexible, while compiling tends to have higher performance. But this is only scratching the surface of a very complex topic.

I highly value performance, and I saw a lack of programming languages that are both high performance and simplicity-oriented, so I went with compiled for Pinecone.

This was an important decision to make early on, because a lot of language design decisions are affected by it (for example, static typing is a big benefit to compiled languages, but not so much for interpreted ones).

Despite the fact that Pinecone was designed with compiling in mind, it does have a fully functional interpreter which was the only way to run it for a while. There are a number of reasons for this, which I will explain later on.

Choosing a Language

I know it’s a bit meta, but a programming language is itself a program, and thus you need to write it in a language. I chose C++ because of its performance and large feature set. Also, I actually do enjoy working in C++.

If you are writing an interpreted language, it makes a lot of sense to write it in a compiled one (like C, C++ or Swift) because the performance lost in the language of your interpreter and the interpreter that is interpreting your interpreter will compound.

If you plan to compile, a slower language (like Python or JavaScript) is more acceptable. Compile time may be bad, but in my opinion that isn’t nearly as big a deal as bad run time.

High Level Design

A programming language is generally structured as a pipeline. That is, it has several stages. Each stage has data formatted in a specific, well defined way. It also has functions to transform data from each stage to the next.

The first stage is a string containing the entire input source file. The final stage is something that can be run. This will all become clear as we go through the Pinecone pipeline step by step.

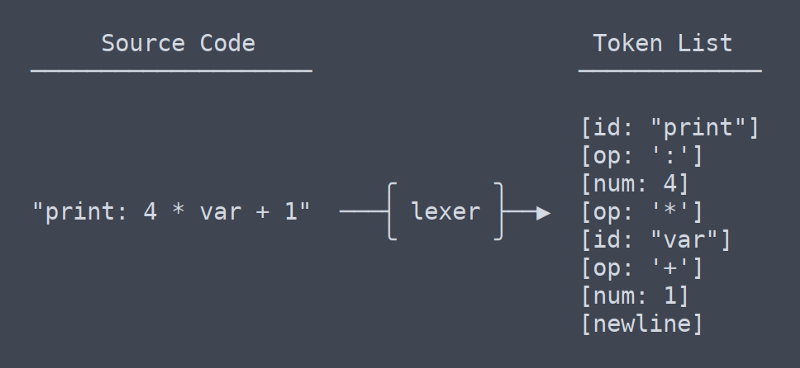

The first step in most programming languages is lexing, or tokenizing. ‘Lex’ is short for lexical analysis, a very fancy word for splitting a bunch of text into tokens. The word ‘tokenizer’ makes a lot more sense, but ‘lexer’ is so much fun to say that I use it anyway.

A token is a small unit of a language. A token might be a variable or function name (AKA an identifier), an operator or a number.

Task of the Lexer

The lexer is supposed to take in a string containing an entire file’s worth of source code and spit out a list containing every token.

Future stages of the pipeline will not refer back to the original source code, so the lexer must produce all the information needed by them. The reason for this relatively strict pipeline format is that the lexer may do tasks such as removing comments or detecting if something is a number or identifier. You want to keep that logic locked inside the lexer, both so you don’t have to think about these rules when writing the rest of the language, and so you can change this type of syntax all in one place.
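To make the lexer’s job concrete, here is a minimal sketch in Python (Pinecone’s own lexer is written in C++ and is not reproduced here). The token categories, the `#` comment syntax, and the names `Token`, `TOKEN_SPEC` and `lex` are assumptions for a tiny expression language. Note how comments and whitespace are dropped inside the lexer, so later stages never have to think about them.

```python
from __future__ import annotations

import re
from typing import NamedTuple


class Token(NamedTuple):
    kind: str  # "number", "identifier", or "operator"
    text: str


# Hypothetical token rules for a tiny expression language (not Pinecone's syntax).
TOKEN_SPEC = [
    ("comment", r"#[^\n]*"),         # discarded: later stages never see comments
    ("whitespace", r"\s+"),          # discarded
    ("number", r"\d+(?:\.\d+)?"),
    ("identifier", r"[A-Za-z_]\w*"),
    ("operator", r"[-+*/=()]"),
]
MASTER_PATTERN = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC))


def lex(source: str) -> list[Token]:
    """Turn an entire source string into a flat list of tokens."""
    tokens = []
    pos = 0
    while pos < len(source):
        match = MASTER_PATTERN.match(source, pos)
        if match is None:
            raise SyntaxError(f"unexpected character {source[pos]!r} at offset {pos}")
        kind = match.lastgroup  # name of the rule that matched
        if kind not in ("comment", "whitespace"):
            tokens.append(Token(kind, match.group()))
        pos = match.end()
    return tokens
```

For example, `lex("x = 3.5 * (y + 2)  # note")` yields nine tokens, starting with `Token(kind='identifier', text='x')`; the comment never reaches the parser. Adding an operator means editing one regular expression in one place.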

The day I started the language, the first thing I wrote was a simple lexer. Soon after, I started learning about tools that would supposedly make lexing simpler, and less buggy.

The predominant such tool is Flex, a program that generates lexers. You give it a file with a special syntax that describes the language’s tokens as regular expressions. From that it generates a C program which lexes a string and produces the desired output.

My Decision

I opted to keep the lexer I wrote for the time being. In the end, I didn’t see significant benefits of using Flex, at least not enough to justify adding a dependency and complicating the build process.

My lexer is only a few hundred lines long, and rarely gives me any trouble. Rolling my own lexer also gives me more flexibility, such as the ability to add an operator to the language without editing multiple files.

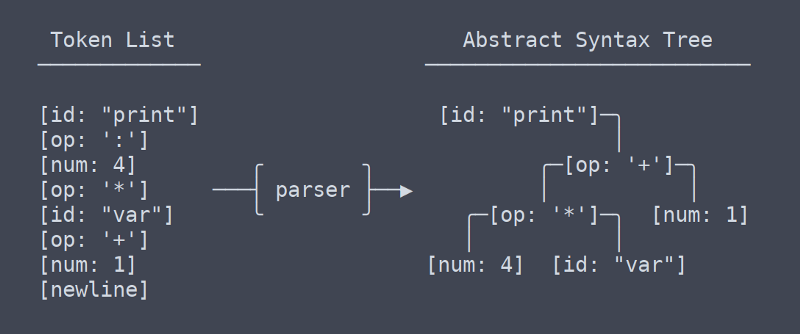

The second stage of the pipeline is the parser. The parser turns a list of tokens into a tree of nodes. A tree used for storing this type of data is known as an Abstract Syntax Tree, or AST. At least in Pinecone, the AST does not have any info about types or which identifiers are which. It is simply structured tokens.

Parser Duties

The parser adds structure to the ordered list of tokens the lexer produces. To resolve ambiguities, the parser must take into account parentheses and the order of operations. Simply parsing operators isn’t terribly difficult, but as more language constructs get added, parsing can become very complex.
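Here is a small Python sketch of how a hand-written parser can handle parentheses and the order of operations. It uses precedence climbing, one common technique for expression parsing; it is not necessarily what Pinecone’s 750-line C++ parser does. The input is assumed to be already-lexed token strings, and the node classes and precedence table are hypothetical. As in Pinecone’s AST, the leaves carry no type information: they are simply structured tokens.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional, Union


@dataclass
class Leaf:
    text: str  # a number or identifier token, stored as-is (no type info yet)


@dataclass
class BinOp:
    op: str
    left: Node
    right: Node


Node = Union[Leaf, BinOp]

# Higher number = binds tighter (a hypothetical precedence table).
PRECEDENCE = {"+": 1, "-": 1, "*": 2, "/": 2}


class Parser:
    def __init__(self, tokens: list[str]) -> None:
        self.tokens = tokens
        self.pos = 0

    def peek(self) -> Optional[str]:
        return self.tokens[self.pos] if self.pos < len(self.tokens) else None

    def advance(self) -> str:
        token = self.peek()
        if token is None:
            raise SyntaxError("unexpected end of input")
        self.pos += 1
        return token

    def parse_primary(self) -> Node:
        token = self.advance()
        if token == "(":
            node = self.parse_expression(0)  # parentheses restart at the lowest precedence
            if self.advance() != ")":
                raise SyntaxError("expected ')'")
            return node
        if token in PRECEDENCE or token == ")":
            raise SyntaxError(f"unexpected token {token!r}")
        return Leaf(token)

    def parse_expression(self, min_prec: int) -> Node:
        # Precedence climbing: absorb operators that bind at least as tightly as min_prec.
        left = self.parse_primary()
        while (op := self.peek()) in PRECEDENCE and PRECEDENCE[op] >= min_prec:
            self.advance()
            # A higher minimum for the right side makes equal operators left-associative.
            right = self.parse_expression(PRECEDENCE[op] + 1)
            left = BinOp(op, left, right)
        return left


def parse(tokens: list[str]) -> Node:
    parser = Parser(tokens)
    tree = parser.parse_expression(0)
    if parser.peek() is not None:
        raise SyntaxError(f"unexpected token {parser.peek()!r}")
    return tree
```

With this table, `1 + 2 * 3` parses as `1 + (2 * 3)` and `8 - 3 - 1` as `(8 - 3) - 1`. Supporting a new binary operator only requires a new entry in `PRECEDENCE`.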

Again, there was a decision to make involving a third party library. The predominant parsing library is Bison. Bison works a lot like Flex. You write a file in a custom format that stores the grammar information, then Bison uses that to generate a C program that will do your parsing. I did not choose to use Bison.

Why Custom Is Better

With the lexer, the decision to use my own code was fairly obvious. A lexer is such a trivial program that not writing my own felt almost as silly as not writing my own ‘left-pad’.

With the parser, it’s a different matter. My Pinecone parser is currently 750 lines long, and I’ve written three of them because the first two were trash.

I originally made my decision for a number of reasons, and while it hasn’t gone completely smoothly, most of them hold true. The major ones are as follows:

- Minimize context switching in workflow: context switching between C++ and Pinecone is bad enough without throwing in Bison’s grammar grammar

- Keep build simple: every time the grammar changes Bison has to be run before the build. This can be automated but it becomes a pain when switching between build systems.

- I like building cool shit: I didn’t make Pinecone because I thought it would be easy, so why would I delegate a central role when I could do it myself? A custom parser may not be trivial, but it is completely doable.

In the beginning I wasn’t completely sure if I was going down a viable path, but I was given confidence by what Walter Bright (a developer on an early version of C++, and the creator of the D language) had to say on the topic:

“Somewhat more controversial, I wouldn’t bother wasting time with lexer or parser generators and other so-called “compiler compilers.” They’re a waste of time. Writing a lexer and parser is a tiny percentage of the job of writing a compiler. Using a generator will take up about as much time as writing one by hand, and it will marry you to the generator (which matters when porting the compiler to a new platform). And generators also have the unfortunate reputation of emitting lousy error messages.”

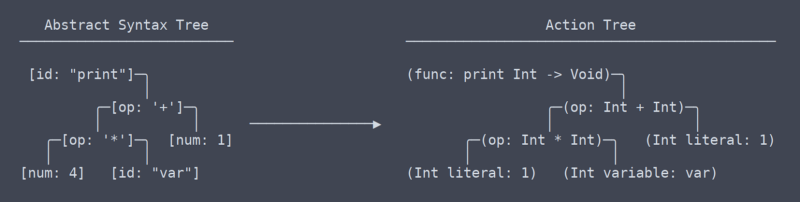

Action Tree

We have now left the area of common, universal terms, or at least I don’t know what the terms are anymore. From my understanding, what I call the ‘action tree’ is most akin to LLVM’s IR (intermediate representation).

There is a subtle but very significant difference between the action tree and the abstract syntax tree. It took me quite a while to figure out that there even should be a difference between them (which contributed to the need for rewrites of the parser).

Action Tree vs AST

Put simply, the action tree is the AST with context. That context is info such as what type a function returns, or that two places in which a variable is used are in fact using the same variable. Because it needs to figure out and remember all this context, the code that generates the action tree needs lots of namespace lookup tables and other thingamabobs.
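A rough Python sketch of that extra context follows, under simplifying assumptions: the AST is a list of `("let", name, expr)` tuples, and the only types are `Int` and `Float`. A scope dictionary plays the role of the namespace lookup table, so every use of a name resolves to the same `Variable` object, and every action records its result type. All names here are hypothetical; this is not Pinecone’s implementation.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass(eq=False)
class Variable:
    """One declared variable; identity (not name) is what links its uses together."""
    name: str
    type: str


@dataclass
class Action:
    kind: str             # "literal", "load", "add" or "store"
    type: str             # result type: context the AST did not have
    value: object = None  # literal value, or the resolved Variable for load/store
    children: list = field(default_factory=list)


def build_actions(statements: list[tuple]) -> list[Action]:
    """Turn ("let", name, expr) AST tuples into typed actions with resolved names."""
    scope: dict[str, Variable] = {}  # the namespace lookup table

    def build_expr(node: tuple) -> Action:
        kind = node[0]
        if kind == "num":
            text = node[1]
            if "." in text:
                return Action("literal", "Float", float(text))
            return Action("literal", "Int", int(text))
        if kind == "var":
            if node[1] not in scope:
                raise NameError(f"undefined variable {node[1]!r}")
            variable = scope[node[1]]
            return Action("load", variable.type, variable)
        if kind == "add":
            left, right = build_expr(node[1]), build_expr(node[2])
            result_type = "Float" if "Float" in (left.type, right.type) else "Int"
            return Action("add", result_type, children=[left, right])
        raise ValueError(f"unknown AST node {kind!r}")

    actions = []
    for _, name, expr in statements:
        value = build_expr(expr)
        # The first assignment declares the variable; later ones must keep its type.
        variable = scope.setdefault(name, Variable(name, value.type))
        if variable.type != value.type:
            raise TypeError(f"cannot assign {value.type} to {name} ({variable.type})")
        actions.append(Action("store", variable.type, variable, [value]))
    return actions
```

Because types are known at this stage, a type mismatch is reported before anything runs, which is the kind of check static typing makes possible.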

Running the Action Tree

Once we have the action tree, running the code is easy. Each action node has a function ‘execute’ which takes some input, does whatever the action should (including possibly calling sub-actions) and returns the action’s output. This is the interpreter in action.
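A minimal Python sketch of that idea: each node class implements `execute`, calling its sub-actions as needed, and running a program is just calling `execute` on the root. The class names are hypothetical, and for brevity variables live in a plain `env` dictionary keyed by name, whereas a real action tree would already have resolved them.

```python
from __future__ import annotations

from dataclasses import dataclass


class Action:
    """Base class: every action node knows how to execute itself."""

    def execute(self, env: dict) -> object:
        raise NotImplementedError


@dataclass
class Literal(Action):
    value: object

    def execute(self, env: dict) -> object:
        return self.value


@dataclass
class Load(Action):
    name: str

    def execute(self, env: dict) -> object:
        return env[self.name]


@dataclass
class Store(Action):
    name: str
    value: Action

    def execute(self, env: dict) -> object:
        env[self.name] = self.value.execute(env)  # run the sub-action, then store its output
        return env[self.name]


@dataclass
class Add(Action):
    left: Action
    right: Action

    def execute(self, env: dict) -> object:
        return self.left.execute(env) + self.right.execute(env)


@dataclass
class Block(Action):
    body: list[Action]

    def execute(self, env: dict) -> object:
        result = None
        for action in self.body:  # run each sub-action in order
            result = action.execute(env)
        return result  # a block's output is its last action's output
```

For example, `Block([Store("x", Literal(2)), Store("y", Add(Load("x"), Literal(40))), Load("y")]).execute({})` returns 42.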

Compiling Options

“But wait!” I hear you say, “isn’t Pinecone supposed to be compiled?” Yes, it is. But compiling is harder than interpreting. There are a few possible approaches.

Build My Own Compiler

This sounded like a good idea to me at first. I do love making things myself, and I’ve been itching for an excuse to get good at assembly.

Unfortunately, writing a portable compiler is not as easy as writing some machine code for each language element. Because of the number of architectures and operating systems, it is impractical for any individual to write a cross platform compiler backend.

Even the teams behind Swift, Rust and Clang don’t want to bother with it all on their own, so instead they all use…

LLVM is a collection of compiler tools. It’s basically a library that will turn your language into a compiled executable binary. It seemed like the perfect choice, so I jumped right in. Sadly I didn’t check how deep the water was and I immediately drowned.

LLVM, while not assembly language hard, is gigantic complex library hard. It’s not impossible to use, and they have good tutorials, but I realized I would have to get some practice before I was ready to fully implement a Pinecone compiler with it.

Transpiling

I wanted some sort of compiled Pinecone and I wanted it fast, so I turned to one method I knew I could make work: transpiling.

I wrote a Pinecone to C++ transpiler, and added the ability to automatically compile the output source with GCC. This currently works for almost all Pinecone programs (though there are a few edge cases that break it). It is not a particularly portable or scalable solution, but it works for the time being.
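The transpiling idea can be sketched in a few lines of Python: walk the tree, emit equivalent C++ source, then hand the file to GCC’s `g++`. The tuple-based tree format, the `let`/`print` statements, and the helper names are hypothetical; a real transpiler has to cover far more of the language.

```python
import os
import shutil
import subprocess
import tempfile


def expr_to_cpp(node: tuple) -> str:
    """Emit a C++ expression for a ("num" | "var" | "add" | "mul", ...) tuple."""
    kind = node[0]
    if kind in ("num", "var"):
        return node[1]
    if kind in ("add", "mul"):
        op = "+" if kind == "add" else "*"
        # Parenthesize every binary operation so C++ precedence can never reorder it.
        return f"({expr_to_cpp(node[1])} {op} {expr_to_cpp(node[2])})"
    raise ValueError(f"cannot transpile {kind!r}")


def transpile(statements: list) -> str:
    """Turn a list of ("let", name, expr) / ("print", expr) tuples into a C++ program."""
    lines = ["#include <iostream>", "", "int main() {"]
    declared = set()
    for statement in statements:
        if statement[0] == "let":
            _, name, expr = statement
            # Declare on first assignment, plain assignment afterwards.
            prefix = "" if name in declared else "auto "
            declared.add(name)
            lines.append(f"    {prefix}{name} = {expr_to_cpp(expr)};")
        elif statement[0] == "print":
            lines.append(f"    std::cout << {expr_to_cpp(statement[1])} << std::endl;")
        else:
            raise ValueError(f"unknown statement {statement[0]!r}")
    lines += ["    return 0;", "}"]
    return "\n".join(lines) + "\n"


def compile_cpp(cpp_source: str, output_path: str) -> None:
    """Write the generated C++ to a temporary file and build it with g++."""
    if shutil.which("g++") is None:
        raise RuntimeError("g++ was not found on PATH")
    with tempfile.TemporaryDirectory() as tmp_dir:
        cpp_path = os.path.join(tmp_dir, "program.cpp")
        with open(cpp_path, "w") as cpp_file:
            cpp_file.write(cpp_source)
        subprocess.run(["g++", "-O2", "-o", output_path, cpp_path], check=True)
```

For example, `compile_cpp(transpile(program), "program")` produces an executable named `program`, assuming `g++` is installed. Leaning on an existing C++ compiler is what makes this approach quick to build, and also why it is tied to that toolchain.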

Assuming I continue to develop Pinecone, it will get LLVM compiling support sooner or later. I suspect no matter how much I work on it, the transpiler will never be completely stable, and the benefits of LLVM are numerous. It’s just a matter of when I have time to make some sample projects in LLVM and get the hang of it.

Until then, the interpreter is great for trivial programs and C++ transpiling works for most things that need more performance.

I hope I’ve made programming languages a little less mysterious for you. If you do want to make one yourself, I highly recommend it. There are a ton of implementation details to figure out but the outline here should be enough to get you going.

Here is my high level advice for getting started (remember, I don’t really know what I’m doing, so take it with a grain of salt):

- If in doubt, go interpreted. Interpreted languages are generally easier to design, build and learn. I’m not discouraging you from writing a compiled one if you know that’s what you want to do, but if you’re on the fence, I would go interpreted.

- When it comes to lexers and parsers, do whatever you want. There are valid arguments for and against writing your own. In the end, if you think out your design and implement everything in a sensible way, it doesn’t really matter.

- Learn from the pipeline I ended up with. A lot of trial and error went into designing the pipeline I have now. I have attempted eliminating ASTs, ASTs that turn into action trees in place, and other terrible ideas. This pipeline works, so don’t change it unless you have a really good idea.

- If you don’t have the time or motivation to implement a complex general purpose language, try implementing an esoteric language such as Brainfuck. These interpreters can be as short as a few hundred lines.
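To show how small such a project can be, here is a bare-bones Brainfuck interpreter in Python. It assumes the common conventions of a 30,000-cell tape of 8-bit wrapping cells and reading 0 at end of input; implementations differ on these details. The function name `run_brainfuck` is illustrative.

```python
def run_brainfuck(code: str, input_data: str = "") -> str:
    """Interpret a Brainfuck program and return everything it prints."""
    # Pre-compute matching bracket positions so loops can jump directly.
    jump = {}
    stack = []
    for i, ch in enumerate(code):
        if ch == "[":
            stack.append(i)
        elif ch == "]":
            if not stack:
                raise SyntaxError(f"unmatched ']' at {i}")
            start = stack.pop()
            jump[start], jump[i] = i, start
    if stack:
        raise SyntaxError(f"unmatched '[' at {stack[-1]}")

    tape = [0] * 30000  # the classic 30,000-cell tape
    ptr = pc = 0
    inp = iter(input_data)
    out = []
    while pc < len(code):
        ch = code[pc]
        if ch == ">":
            ptr += 1
        elif ch == "<":
            ptr -= 1
        elif ch == "+":
            tape[ptr] = (tape[ptr] + 1) % 256  # 8-bit wrapping cells
        elif ch == "-":
            tape[ptr] = (tape[ptr] - 1) % 256
        elif ch == ".":
            out.append(chr(tape[ptr]))
        elif ch == ",":
            tape[ptr] = ord(next(inp, "\0"))  # end of input reads as 0
        elif ch == "[" and tape[ptr] == 0:
            pc = jump[pc]  # skip the loop body
        elif ch == "]" and tape[ptr] != 0:
            pc = jump[pc]  # jump back to the loop start
        # Any other character is a comment and is ignored.
        pc += 1
    return "".join(out)
```

For example, `run_brainfuck("++++++++[>++++++++<-]>+.")` returns `"A"` (8 × 8 + 1 = 65), and `run_brainfuck(",[.,]", "hi")` echoes `"hi"`.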

I have very few regrets when it comes to Pinecone development. I made a number of bad choices along the way, but I have rewritten most of the code affected by such mistakes.

Right now, Pinecone is in a good enough state that it functions well and can be easily improved. Writing Pinecone has been a hugely educational and enjoyable experience for me, and it’s just getting started.


How to Write an Essay for Programming Students

Programming is a crucial aspect of today’s technology-based lives. It complements the usability of computers and the internet and enhances data processing in machines.

If there were no programmers (and, therefore, no programs such as Microsoft Office, Google Drive, or Windows), you couldn’t be reading this text right now.

Given the significance of this field, many programming students are asked to write a paper about it, and some of them look for college essay services to get it done.

However, if you’re brave enough to write your essay, here’s everything you need to know before embarking on the process.

What is Computer Programming

Computer programming aims to create sets of instructions that automate various tasks in a system, such as a computer, a video game console, or even a cell phone.

Because our daily activities are mostly centered on technology, computer programming is considered to be crucial, and at the same time, a challenging job. Therefore, if you desire to start your career path as a programmer, being hardworking is your number one requirement.

Coding Vs. Writing

Writing code that computers can recognize might be a tough job for programmers, but what makes it even more difficult is that they also need to write papers that humans can understand.

Writing code is very similar to writing a paper. First, you should understand the problem (determine the purpose of your writing). Then, you should think about the issue and look for good strategies to solve it (search for related data for your paper). Last but not least comes debugging: just like editing and proofreading your document, debugging ensures your code is well written.

In the following, we will elaborate more on the writing process.

Essay Writing Process

Writing a programming essay is no different from other types of essays. Once you get to know the basic structure, the rest of the procedure will be a walk in the park.

Write an Outline

An outline is the most critical part of every writing assignment. When you write one, you’re actually preparing an overall structure for your future work and planning for what you intend to talk about throughout the paper.

Your outline must have three main parts: an introduction, a body, and a conclusion, each of which will be explained in detail.

Introduction

The introductory paragraph has two objectives. The first one is to grab readers’ attention, and the second one is to introduce the thesis statement. Besides, it can be used to present the general direction of the subsequent paragraphs and make readers ready for what’s coming next.

Body

The body, which usually contains three paragraphs, is the largest and most important part of the essay. Each of these three paragraphs has its own topic sentence and supporting ideas to justify it, all of which are formed to support the thesis statement.

Based on the subject and type of essay, you can use various materials such as statistics, quotations, examples, or reasons to support your points and write the body paragraphs.

Another important requirement for the body is to use a transition sentence at the end of each body paragraph. This sentence gives a natural flow to your paper and directs your readers smoothly towards the next paragraph topic.

Conclusion

A conclusion is a brief restatement of the previous paragraphs: it summarizes the writing and points out the main points of the body. It gives the essay a sense of closure and provides readers with some persuasive closing sentences.

Proofreading

If you want a polished result, don’t submit the final work without rereading and revising it. While many people consider it a skippable step, proofreading is as important as the writing process itself.

Read your paper out loud to spot any grammatical or typing errors. You can also pay an affordable essay service to check for potential mistakes, or have your friends do the proofreading for you.

Essay Writing Tips for Programming Students

● Know your audience: Programming is a complex topic, and not everyone understands it well. Consider how much your reader knows about the topic before you start writing. If you use an essay service, tell the writers who your readers are.

● Cover different technologies: There are so many programming frameworks and tools out there, and new ones seem to pop up every day. Try to cover the relevant technologies in your essay but do stay focused. You shouldn’t confuse your reader by dropping names.

● Pay attention to theory: Many programming students love to get coding and hate theoretical stuff. But writing an essay is an academic task, and much like any other one, it needs to be done based on some theory.

Bottom Line

People who work as programmers need to be versatile, because they must write documents for both computers and humans. For the latter, this article offers concise instructions. However, if you are a programming student who hasn't yet developed your writing skills, or you lack the time to do so, getting help from a legit essay writing service may be your best option.

How to Write an Essay Using Python Programming Language

Python is one of the most readable programming languages, a major reason it has become a go-to tool for writers. Where many languages rely on punctuation and symbols, Python uses clear English keywords, and it marks code blocks with indentation instead of braces. This makes the code easy to follow and understand.
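To see what this keyword-based style looks like in practice, here is a small hypothetical function (the name `words_to_review` and its inputs are made up for illustration). Its logic is carried by words such as `for`, `in`, `if`, `and`, and `not`, and its blocks are marked by indentation rather than braces.

```python
def words_to_review(words, banned):
    """Return the words longer than three letters that are not in `banned`."""
    selected = []
    for word in words:  # "for ... in" instead of an index-based loop
        if len(word) > 3 and word not in banned:  # "and" / "not in" instead of && and !
            selected.append(word)
    return selected


print(words_to_review(["the", "thesis", "is", "clear", "ok"], {"clear"}))
```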

What is Python?

Python is a general-purpose programming language created by Guido van Rossum; its development is now overseen by the Python Software Foundation. It is mainly used in web development, software development, mathematics, and system scripting. For writing, its syntax is close to English, which makes it very readable.

Journalists, social media marketers, SME owners, students, and anyone else looking for new ways to simplify their daily tasks can use open-source Python code to make their work easier and more convenient. Several available resources can help with this.

Generative Pre-Trained Transformer 3

Text generated by GPT-3 can be hard to distinguish from human writing, largely because its very large number of parameters lets it model language closely. If you want to bring a Natural Language Processing tool into your daily work as a writer, GPT-3 is a strong candidate.

With 175 billion parameters, GPT-3 was one of the most advanced language models available when OpenAI released it. It generates human-like text and can be used by anyone, from attorneys and scientists to mathematicians and college students.

GPT-Neo is not as powerful as GPT-3, but it follows a similar design. What's more, it's open source, so anyone can download the model and use the code. Getting started is less cumbersome than it may sound: install PyTorch, download a pretrained GPT-Neo model, and prompt it to generate text for your essays, articles, and so on.

NLTK in Python

NLTK, the Natural Language Toolkit, is a Python library for Natural Language Processing. It is mainly used to build programs that work with human language data.

If you want to write with NLTK, be aware that it isn't the most advanced NLP tool and doesn't handle long texts well. Text produced by simple NLTK-based models may read acceptably for the first few hundred words but tends to become repetitive or odd as it gets longer. It is, however, adequate for short pieces such as essays, landing pages, and short articles.
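One way to see why short generated texts hold up better than long ones is a toy bigram model, the kind of simple n-gram approach often built with toolkits like NLTK. The sketch below uses only Python's standard library rather than NLTK's own API, and the function names and sample corpus are hypothetical. Because the model only knows which word followed which, longer outputs can only recombine the same word pairs.

```python
import random
from collections import defaultdict


def build_bigram_model(text):
    """Map each word to the list of words that follow it in the text."""
    words = text.split()
    model = defaultdict(list)
    for current, following in zip(words, words[1:]):
        model[current].append(following)
    return model


def generate(model, start, length, seed=0):
    """Walk the model from `start`, picking a random successor at each step."""
    rng = random.Random(seed)  # fixed seed so the output is reproducible
    output = [start]
    for _ in range(length - 1):
        successors = model.get(output[-1])  # .get does not add missing keys
        if not successors:  # dead end: this word never had a successor
            break
        output.append(rng.choice(successors))
    return " ".join(output)


corpus = "the essay has a thesis and the thesis guides the essay and the reader"
model = build_bigram_model(corpus)
print(generate(model, "the", 12))
```

Every word pair in the output already appears in the corpus, so a longer output soon starts repeating phrases; a bigger corpus delays this but does not remove it.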

If you are tech-savvy and want to try this yourself, install Python on your device, install NLTK with pip (pip install nltk), and then start tokenizing, that is, splitting your text into words or sentences.
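NLTK provides `nltk.sent_tokenize` and `nltk.word_tokenize` for this step; they rely on tokenizer data that you download with `nltk.download()`. To keep the example self-contained, the sketch below approximates both with regular expressions from Python's standard library. The helper names are hypothetical, and the rules are much cruder than NLTK's (for instance, they would wrongly split a sentence after an abbreviation such as "Dr.").

```python
import re


def split_sentences(text):
    """Split text into sentences at whitespace that follows ., ! or ?."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [part for part in parts if part]


def split_words(sentence):
    """Split a sentence into words (keeping simple contractions) and punctuation marks."""
    return re.findall(r"\w+(?:'\w+)?|[^\w\s]", sentence)


text = "Python is readable. Is NLTK useful? Yes, for short texts!"
for sentence in split_sentences(text):
    print(split_words(sentence))
```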

Technology has come a long way in simplifying everyday tasks. Students, professors, journalists, and other professionals can now use code to help draft their essays, and some tools can even imitate an individual writing style. From GPT-2 to GPT-3 to NLTK, these tools were created to assist writers and reduce stress.

We also know that not everyone is tech-inclined, which is why we value educational platforms where students can get professional human help with their assignments without using these tech tools.


Crafting the Perfect Essay: A Programming Tutorial Approach

The connection between programming and essay writing.

At first glance, programming tutorials and essay writing might seem to occupy completely different spaces in the academic world. Yet these two practices have more in common than you might think. Writing an essay can be akin to programming: both require meticulous planning, organizing thoughts (or code) in a logical manner, and delivering a clear, concise output.

Just as you may seek a legit essay writing service when you need help crafting your essay, you might also turn to a programming tutorial when you’re stuck on a coding problem. Both provide structured guidelines and assistance to help you reach your goal.

Treating Your Essay like a Programming Project

Writing an essay can be broken down into five main steps, much like the process of creating a software program:

- Understanding the Task: The first step is akin to understanding the software requirements. In essay writing, you need to understand the question or prompt, just as in programming, you have to understand what the software should accomplish.

- Planning Your Response: Once you understand the task, plan your response. In essay writing, this involves brainstorming and outlining. Similarly, in programming, you design the software architecture before writing any code.

- Writing: The third step involves writing the body of your essay, just as you would write the code for your software. Each paragraph in an essay is like a block of code; it serves a specific purpose and fits into the overall structure.

- Revising and Editing: After writing, it's time to revise and edit. In essay writing, this means refining your language and ensuring your argument is sound. In programming, this is similar to debugging the code and making improvements for better efficiency.

- Final Review: Finally, conduct a final review of your work. For essay writing, this includes proofreading and checking the formatting. For programming, it's like testing the software to ensure it works as expected.

| Emoji | Action in Essay Writing | Equivalent Action in Programming |
|---|---|---|
| 💡 | Understanding the Task | Understanding the Software Requirements |
| 🗺️ | Planning Your Response | Designing the Software Architecture |
| 📝 | Writing the Essay | Writing the Code |
| 🛠️ | Revising and Editing | Debugging and Improving Code |
| ✔️ | Final Review | Testing the Software |

When to Seek Professional Help

While learning to write an essay or learning to program can be rewarding, it can also be challenging. That's why resources exist to help you. If you need assistance with your essay, don't hesitate to reach out to the best college essay writing service. These services can provide guidance and support, much like a programming tutorial can help you navigate a tricky coding problem.

It’s perfectly okay to hire an essay writer if you’re struggling with your workload. Just as in programming, where it’s normal to seek out a tutor or additional resources, it’s entirely acceptable to get help with your essays.

When choosing a service, don't forget to read about the Best Essay Writing Services Online and consider the factors that matter to you, such as cost, quality, and reliability. There are services tailored to different needs, whether you're looking to buy a cheap essay or want specific essay help in the UK.

Writing an essay is a process that requires a systematic approach, much like programming. By breaking down the task into manageable chunks, planning your response, and seeking help when necessary, you can write an essay that truly shines. Remember, you can always turn to a cheap essay writing service or an essay writing guide to ensure that you’re on the right track.

Finally, when you’re ready to share your masterpiece with the world, whether it’s an innovative piece of software or an engaging essay, don’t forget the final step – reviewing and polishing your work to ensure it’s the best it can be. Much like the satisfying feeling of a code running smoothly, there’s nothing quite like the satisfaction of a well-crafted essay. It’s all part of the exciting journey of learning, whether you’re delving into the world of programming or exploring the art of essay writing.


Writing a College Essay Using Python Programming Language

We all know Python as one of the most highly readable computer languages. It’s one of the most studied programming languages, as well as one in high demand when you’re looking for a job. But, did you know that you can write an essay with Python?

Python differs from many other languages in that it relies less on symbols and punctuation. It uses clear English keywords such as "and", "or", "not", and "in" where other languages use symbols, which is why it can be a good tool for writing your essays.

Using Python to Write Your Papers: The Why and How

Of course, there's another, much easier way to get that college paper done on time: you can pay the Edubirdie service to write it. Edubirdie lets you choose your essay writer as well as the price you'll pay for the paper. If you do this, you won't have to go through the trouble of writing a Python program or crafting your essay from scratch. Thanks to writing services, thousands of students around the world meet their deadlines and get high grades without effort, and without anyone knowing.

All you need to do to get your paper ready is order it from the writing company of your choice. As a result, you can sit back and relax while your assignment is written, and simply download it when it’s done.

This way you can certainly complete your papers on time. Still, if you want to learn programming on your own by practicing your Python skills, writing an essay with the language is a good opportunity. Python is no longer used only by developers: it is simple, which makes it great for beginner developers as well as writers.

Instead of doing monotonous tasks like writing essays all the time, you can automate them by writing code using this programming language.

With that in mind, let's examine the options you can use to accomplish this.

Use GPT-3

GPT-3, the Generative Pre-Trained Transformer 3, is a highly advanced language model that is well suited to writing tasks. Many applications built on this model are now used to write product descriptions, essays, articles, headlines, landing pages, and more.

GPT-3 has 175 billion parameters, compared with 1.5 billion in its predecessor, GPT-2. This scale is a large part of why the text it generates reads so much like human writing.
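Put in proportion, the two parameter counts quoted above mean GPT-3 is roughly 117 times larger than GPT-2:

```latex
\frac{175 \times 10^{9}}{1.5 \times 10^{9}} \approx 116.7
```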

Knowing this, if you want a program that has the highest potential of creating human-like content, this tool is what you need.

Use NLTK in Python

Another option is NLTK, the Natural Language Toolkit. This Python library is used to build Natural Language Processing programs that work with human language. As you can imagine, it's frequently used in programs that generate content.

Even so, you should know its downsides before you choose it. NLTK works well with short texts, but it is not as advanced as GPT-3, so it's not ideal for long papers. It can help produce good essays and articles, but if you need hundreds of pages, look elsewhere.

To create a program with it, you need to be tech-savvy and know how to install and create programs with NLTK. If you are, install Python followed by NLTK, and start tokenizing!

Use GPT-Neo

Finally, you can use GPT-Neo, a good open-source alternative to GPT-3. When GPT-3 first launched, access required applying and waiting for approval, so many people could not use it; GPT-Neo has no such requirement.

If you are one of them, or are looking for something more flexible, GPT-Neo is a good choice. Created by EleutherAI, it has an architecture similar to GPT-3's, although its released models are much smaller, ranging from 125 million to 2.7 billion parameters.

Because it is open source, anyone can access and use the code, which makes building programs with it simpler. It's worth checking out if you aren't a Python expert.

Wrapping Up about Python

Technology has advanced a lot and while programs cannot replace humans altogether, they certainly do a great job simplifying our tasks. If you are good at Python and have the time to create some code, use the tips here to make your essay writing much easier in the future!


Programming Languages Essay Examples

Programming languages are sets of keywords, phrases, and rules that let you communicate with a computer system. An introductory essay on programming languages typically shows how code written in these languages gives the computer instructions to perform specific tasks. Common examples of programming languages include Ruby, Perl, COBOL, ALGOL, Python, Java, C, C++, C#, JavaScript, R, and PHP.

Every essay about programming languages must also detail the common types of languages and their uses. App developers, video game developers, web designers, and control systems engineers use the knowledge of coding in their daily work. If you are curious and motivated enough, you can learn how to code.

Also, as an Art student, you might find it difficult to locate high-quality, simplified content about programming languages. When you write essays on programming languages, you'll need a solid guide to complete a strong paper. Instead of struggling like other students, take advantage of outstanding coding-related papers available online today.

The Spanish Empire was one of the most successful empires in history. Of the global empires that have existed, it was one of the largest. This empire dominated the battlefields of Europe, and its experienced navy made it widely feared. The Spanish Empire colonized many nations. It carried […]

A generalized double diamond approach to the global competitiveness of Korea and Singapore. H. Chang Moon (a, *), Alan M. Rugman (b), Alain Verbeke (c). (a) Graduate Institute for International & Area Studies, Seoul National University, Seoul 151-742, South Korea; (b) Templeton College, University of Oxford, Oxford OX1 5NY, UK; (c) Solvay Business School, University of Brussels (V. U. […]

Usually, people start learning programming by writing small, simple programs that consist of only one main program. Here, "main program" means a sequence of commands or statements that modify data which is global throughout the whole program; the main program operates directly on that global data. This programming technique has tremendous disadvantages […]
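A minimal sketch of the style the excerpt describes, written in Python with hypothetical variable names: there are no procedures, only a flat main program that reads and modifies global data. A commonly cited drawback is visible even at this size: an operation needed twice (here, the withdrawal) has to be written out twice.

```python
# Global data, visible to and modifiable by every statement below.
balance = 100
history = []

# The "main program": a flat sequence of statements operating on the globals.
balance = balance - 30
history.append("withdraw 30")

balance = balance + 50
history.append("deposit 50")

# The same withdrawal is needed again, so its statements are copied verbatim.
balance = balance - 30
history.append("withdraw 30")

print(balance, history)
```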

When discussing a company's reputation in light of the concept of managing interdependence, we first have to understand what managing interdependence means. Global interdependence is a compelling factor in the global business environment creating demands on international managers to take a positive stance on issues of social responsibility and ethical behavior, economic development […]

In 2005, Cornish noted that the global apprehension about rising deculturation is a result of various factors including high mobility, swift changes in societies, economic growth and more. This trend has influenced education by leading to deculturation for individuals who move to foreign countries with diverse languages and cultures or those whose traditional cultures have […]

Executive Summary: Disney and the Pirates of the Industry. I. Introduction: As a global company with high interest in both the music and film industries, it is essential that Disney deal with media piracy effectively. With Internet access increasing globally, piracy has the potential to create huge financial losses for Disney. In order to adequately […]

ComfortDelGro Corporation Limited is the world's second-largest public listed passenger land transport company, with a fleet of 41,000 vehicles. The Group has gone global since the merger of Comfort Group and Delgro Corporation on 29th March 2003. ComfortDelGro's businesses include bus, taxi, rail, car rental & leasing, automotive engineering, maintenance services & diesel […]

Despite short-term expenses, we must continue to invest heavily in markets overseas because global expansion is the key to long-term sustainability. Secondly, we must continue to innovate. This company was built on continuous innovation, which enabled it to achieve low costs, outstanding customer service, and lasting market share. We must continue to build out the […]

One is the Adani Group of India, a leader in International Trading and Infrastructure development with recent forays into Power, Infrastructure, Global Trading, Logistics, and Energy; the other is Wilmar International Limited of Singapore, Asia's leading Agribusiness Group, with business interests spanning Oil Palm cultivation, edible oil refining, oilseeds crushing, consumer pack edible oil processing and […]

Lansdowne Chemicals, a chemical production company, started operations in 1977 but has grown over time into a global chemical supplier. It has expanded its activities to include Nutrition, Aroma, Water Treatment, and an assortment of flavors and fragrances. The brain behind the company's success is George Watkinson-Yull, […]

1. What is the biggest competitive threat facing Carrefour as it expands in the global market? Carrefour, the pioneer of the hypermarket, faced many competitive threats while expanding globally; it offered many products, such as groceries, toys, furniture, fast food, and even financial services, all under one roof. The first hypermarket was opened in 1963 […]

According to Yasin et al. (2003), the crucial development of the worldwide tourism industry drives the service economy and demands accommodations for both domestic and international travelers. Hotels are established to meet the expectations of tourists who require sustenance and shelter during their travels. The lines between tourism, travel, leisure, and hospitality blend smoothly, making […]

The aim is to produce a fully working calculator program that incorporates as many mathematical features as possible. The program is to be created using Delphi, an IDE based on the Pascal language. I personally prefer to program in C++ as I have quite a lot of experience with it. However, this seems a worthwhile […]

This paper explains the process that is required to create an eye-catching and successful website, or simply, web page. This includes the procedure of building the website and the tools needed to accomplish that goal. The instructions and ideas presented in this essay give a clear road map to anyone (beginner or experienced in the […]

The travel and tourism industry has placed Hilton at its forefront, with the hotel claiming to be the best in the industry. As a corporation, Hilton operates in 80 countries through the acquisition of various chain hotels. They boast of being one of the top entities in the corporate travel and conference market and aim […]

This report is helpful in two ways. It is meant to give people who have lost their password the opportunity to recover it by applying simple techniques, without long waiting times, and to enable owners of websites with protected content to protect that content. Webmasters who know the techniques described in this report have much better prospects of keeping their website secure […]

1. Describe the economic characteristics of the global motor vehicle industry. The 2008 financial crisis began as the American subprime mortgage crisis and eventually evolved into a global financial crisis. Because of its impact, most countries saw a sharp slowdown in consumer vehicle demand. Also, because of the financial crisis, the […]

Jollibee is the largest fast food chain in the Philippines, operating a nationwide network of over 750 stores. A dominant market leader in the Philippines, Jollibee enjoys the lion’s share of the local market that is more than all the other multinational brands combined. The company has also embarked on an aggressive international expansion plan […]

The underlying force behind K&S's need to change its current supply chain network is cheap labor. The geographical shift of the electronics manufacturing industry to Asia and other Pacific nations has meant that organizations have needed to update their inventory networks. With clients moving to Asia, and new markets […]

By Tom Betzinger, from The Wall Street Journal: Sometimes a perceived disability turns out to be an asset on the job. Though he is only 18 years old and blind, Suleyman Gokyigit (pronounced gok-yi-it) is among the top computer technicians and programmers at InteliData Technologies Corp., a large software company with several offices across […]

Algorithmic trading (also known as black-box trading) refers to the use of automation for trading in financial markets. Simply put, it is computer-guided trading, where a program with direct market access can monitor the market and place trades when certain conditions are met. Earlier, trading strategies were executed by humans, but now it is […]
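The idea of placing trades "when certain conditions are met" can be sketched as a rule that maps recent prices to an action. The example below is a toy moving-average rule with a hypothetical price feed and made-up window sizes; it illustrates the mechanism only and is not a realistic trading strategy (a real system would also need market data access, order execution, and risk controls).

```python
def moving_average(prices, window):
    """Average of the most recent `window` prices."""
    return sum(prices[-window:]) / window


def signal(prices, short_window=3, long_window=5):
    """Return 'buy', 'sell' or 'hold' from a simple moving-average rule."""
    if len(prices) < long_window:
        return "hold"  # not enough history to compute the long average yet
    short_avg = moving_average(prices, short_window)
    long_avg = moving_average(prices, long_window)
    if short_avg > long_avg:
        return "buy"  # recent prices are above the longer-term average
    if short_avg < long_avg:
        return "sell"  # recent prices are below the longer-term average
    return "hold"


# Hypothetical price feed, replayed one tick at a time as if arriving live.
feed = [10, 10, 10, 10, 10, 11, 12, 13, 12, 10, 9, 8]
for tick in range(1, len(feed) + 1):
    print(tick, feed[tick - 1], signal(feed[:tick]))
```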

The Dyna Corporation (Dynacorp) operates global information and communication systems. Dynacorp became the industry leader in the 1960s and 1970s by producing technological innovations. Dynacorp was known as a company whose products were innovative and high-quality. That's why Dynacorp grew rapidly and spread to international markets in that period. In the 1990s, the industry and market […]

Popular Questions About Programming Languages


- a topic sentence that states the main or controlling idea

- supporting sentences to explain and develop the point you’re making

- evidence from your reading or an example from the subject area that supports your point

- analysis of the implication/significance/impact of the evidence finished off with a critical conclusion you have drawn from the evidence.


Top 15 Programming Languages Worth Learning

Intriguingly, Kotlin, a relatively recent addition to the programming scene, received an official endorsement from Google as the preferred language for Android app development. This endorsement underscores how dynamic the programming landscape is in 2023. The tech industry is constantly evolving, driven in part by increasingly advanced AI writing tools, and this evolution brings a growing demand for highly skilled programmers. To navigate this ever-changing landscape, it's essential to acquaint yourself with the top programming languages that can elevate your career.

Top 15 Programming Languages: Short Description

This article delves deep into this ever-changing landscape to bring you insights into the most popular programming languages of 2023 that are poised to dominate the digital realm. These languages, ranging from the versatility of Python to the precision of C and the innovation of languages like Zig and Ballerina, hold the keys to your success in the world of coding. We'll help you understand the strengths, use cases, and potential career opportunities associated with each of these programming languages, so you can make informed decisions about your coding journey.

The Importance of Choosing the Right Programming Language

The importance of selecting the right programming language cannot be overstated. It's a decision that can significantly impact your efficiency as a developer and the success of your projects. Here's why:

- Project Compatibility: Different programming languages are suited for different types of projects. For instance, if you're working on web development, you might lean towards JavaScript, while data analysis tasks often require Python. The wrong choice can lead to unnecessary complications and hinder progress.

- Career Opportunities: Your expertise in a particular language can open or limit career opportunities. Industries and companies often have preferences for specific languages, so aligning your skills with market demands is essential for career growth.

- Project Efficiency: Some languages are more efficient for specific tasks. Choosing the right one can mean faster development, fewer bugs, and, ultimately, cost savings. On the other hand, selecting an inappropriate language can lead to lengthy development cycles and increased expenses.

- Community and Support: The community around a programming language can be a valuable resource. It provides access to libraries, frameworks, and a wealth of knowledge. Popular languages tend to have larger and more active communities, offering better support for troubleshooting and learning.

- Scalability: Consider the scalability of your project. Will it grow over time? Some languages are better suited for scalability than others. If you anticipate growth, it's crucial to choose a language that can accommodate it.

- Personal Preference: Your own preferences and coding style matter. Enjoying the process of coding in a particular language can significantly boost productivity and job satisfaction.

High-Level Programming Languages

High-level languages are the workhorses of modern software development, prized for their ease of use and versatility. Let's look at three prominent examples:

Python has evolved from a niche language into a juggernaut of the programming world. As a high-level language known for its readability and simplicity, it is often the first choice for beginners and seasoned developers alike. Here's why:

- Versatility: Python is incredibly versatile and capable of powering web applications, data analysis, machine learning, and more. Its extensive standard library and third-party packages make it an excellent choice for a wide range of tasks.

- Readability: Python's elegant syntax emphasizes readability, making it easy to understand and maintain code. This feature has contributed to its popularity among developers.

- Large Community: Python boasts a large and active community. This means abundant resources, libraries, and a helpful community that is always ready to assist.
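As a small illustration of the standard library point, the snippet below counts word frequencies and computes an average word length using only built-in modules (`collections` and `statistics`), with no third-party packages to install. The sample sentence is made up.

```python
from collections import Counter
from statistics import mean

essay = "Python is readable and Python is versatile"
words = essay.lower().split()

counts = Counter(words)  # maps each word to how many times it occurs
print(counts.most_common(2))  # the two most frequent words with their counts
print(mean(len(word) for word in words))  # average word length in characters
```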

Being one of the popular programming languages, JavaScript is the backbone of web development, responsible for the dynamic and interactive features you encounter on websites. Key points about JavaScript include:

- Client-Side Dominance: JavaScript primarily operates on the client side, making web pages more interactive and responsive. It's essential for building modern web applications.

- Node.js: With the advent of Node.js, JavaScript has expanded its reach to server-side development. This 'full-stack' capability has made JavaScript even more indispensable for web developers.

- Frameworks and Libraries: JavaScript has a vast ecosystem of frameworks and libraries like React, Angular, and Vue.js, which simplify front-end development.

C# is a high-level language developed by Microsoft, known for its versatility and applicability across various domains. Here's why C# stands out:

- Platform Independence: C# is not confined to Windows; it's cross-platform. With .NET (formerly .NET Core; for example, .NET 6), you can develop applications for Windows, Linux, and macOS.

- Unity Game Development: C# is a staple in game development, particularly with the Unity engine. This makes it an attractive choice for aspiring game developers.

- Strongly Typed: C# is statically typed, so the compiler catches type errors at compile time, which enhances code reliability.

Functional Programming Languages

Functional programming languages are gaining prominence in the software development world, offering a different paradigm that emphasizes immutability, first-class functions, and declarative programming. Let's explore three notable examples:

Haskell is often regarded as the gold standard of pure functional programming languages. It's a language where mathematical rigor meets practical coding. Key features include:

- Immutability: Haskell encourages immutability, making it easier to reason about the behavior of code and preventing many common bugs related to mutable data.

- Type System: Haskell boasts a powerful and expressive type system that can catch many errors at compile-time, ensuring a high degree of code correctness.

- Lazy Evaluation: Haskell uses lazy evaluation, meaning it only computes values when needed. This can lead to more efficient code in some cases.
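Python is not lazily evaluated by default, but its generators give a rough feel for the idea. The sketch below is a Python analogue, not Haskell code: it defines a conceptually infinite stream of squares and computes only the values actually requested, much as `take 5 (map (^2) [1..])` does in Haskell.

```python
from itertools import count, islice


def squares():
    """A conceptually infinite stream of square numbers, produced on demand."""
    for n in count(1):
        yield n * n


# Only the first five squares are ever computed, even though the
# generator could keep producing values forever.
first_five = list(islice(squares(), 5))
print(first_five)
```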

Scala combines functional and object-oriented programming seamlessly, offering the best of both worlds. Here's why Scala stands out:

- Compatibility with Java: Scala runs on the Java Virtual Machine (JVM), allowing for easy interoperability with Java code and access to the extensive Java ecosystem.

- Functional Features: Scala incorporates functional programming concepts like immutability and higher-order functions, which provide concise and expressive code.

- Concurrency: Scala has powerful concurrency libraries like Akka, making it an excellent choice for building highly concurrent and distributed systems.

Being one of the best programming languages to learn, Clojure is a Lisp dialect designed for the Java Virtual Machine (JVM), focusing on simplicity, immutability, and interactive development. Key attributes include:

- Simplicity: Clojure's minimalist syntax and functional approach make it a compelling choice for developers who appreciate clean and concise code.

- Immutable Data Structures: Clojure encourages the use of immutable data structures, which leads to safer and more predictable code.

- Concurrency: Clojure excels at managing concurrency with its software transactional memory (STM) system, making it suitable for building robust and scalable systems.
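Immutability is easier to grasp with an example. The sketch below uses Python's frozen dataclasses as a stand-in, with a hypothetical `Essay` record: an "update" produces a new value and leaves the original untouched. Clojure's persistent data structures go further by sharing unchanged parts between versions, which this sketch does not attempt to show.

```python
from dataclasses import FrozenInstanceError, dataclass, replace


@dataclass(frozen=True)
class Essay:
    title: str
    word_count: int


draft = Essay("Why Clojure?", 800)
revised = replace(draft, word_count=950)  # builds a new object; draft is untouched

try:
    draft.word_count = 1000  # frozen dataclasses reject in-place changes
except FrozenInstanceError:
    print("draft cannot be modified")

print(draft, revised)
```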

Low-Level Programming Languages

Low-level programming languages are the bedrock of systems programming, providing precise control over hardware and resources. Here, we look at three influential low-level languages: C, C++, and Rust.

C is a legendary low-level programming language known for its simplicity, portability, and power. It has stood the test of time and continues to be a critical language for systems programming. Key aspects include:

- Portability: C was designed to be highly portable, allowing code to run on different platforms with minimal modification. This makes it an ideal choice for developing operating systems and embedded systems.

- Efficiency: C offers a high level of control over system resources, making it possible to write extremely efficient code. This efficiency is crucial for tasks where speed and resource utilization are paramount.

- Legacy: Many foundational software systems and libraries are written in C, cementing its significance in the programming world.

C++ builds on the foundation of C and extends it with object-oriented features. It offers both low-level control and high-level abstractions, making it a versatile choice for a wide range of applications. Highlights include:

- Object-Oriented: C++ supports object-oriented programming, enabling developers to model complex systems using classes and objects. This approach enhances code organization and reusability.

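- STL (Standard Template Library): C++ comes with a robust standard library, much of it derived from the STL. It includes containers (such as vector and map), algorithms (such as sort), and the iterators that connect the two. These components simplify many common programming tasks.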

- Performance: C++ retains the performance advantages of C while adding high-level abstractions. This makes it suitable for projects where performance is crucial.

Rust, one of the fastest programming languages available, is a relative newcomer that has quickly gained attention for its emphasis on safety, memory management, and performance. Here's why Rust stands out:

- Memory Safety: Rust's ownership model, enforced at compile time by the borrow checker, rules out common errors such as use-after-free, dangling references, and double frees. Safe Rust also has no null references. An optional value is expressed with the Option type, so the compiler forces you to handle the missing case.

- Concurrency: The same ownership rules apply across threads, so the compiler rejects code that could cause a data race. This makes it much safer to write concurrent code.

- Performance: Despite its strong safety features, Rust delivers performance comparable to C and C++. It's an excellent choice for systems programming, where security and efficiency are paramount.

Specialized and Experimental Programming Languages

In the ever-evolving field of technology, specialized and experimental programming languages are emerging to address problems that established languages handle poorly. Two examples in this category are Carbon and Silicon.

Carbon is an experimental, open-source programming language introduced by Google engineers in 2022 as a possible successor to C++. Rather than demanding a rewrite of existing C++ code, it is meant to let large codebases migrate gradually. Key characteristics of Carbon include:

- C++ Interoperability: Carbon is designed for bidirectional interoperability with C++. New Carbon code can call existing C++ libraries, and C++ code can call into Carbon.

- Modern Language Design: Carbon aims for a simpler, more consistent syntax than C++ and adds features such as checked generics. Stronger memory safety is listed among its longer-term goals.

- Experimental Status: Carbon is still early in development and is not ready for production use. For now it is a language to follow and experiment with rather than one to build products on.

Silicon is another specialized programming language, but it's tailored for an entirely different realm of computing—neuromorphic computing. Neuromorphic systems aim to mimic the functioning of the human brain, opening doors to novel approaches in artificial intelligence and cognitive computing. Silicon offers:

- Neural Circuit Abstractions: Silicon simplifies the development of neuromorphic systems by providing abstractions for neural circuits. It allows programmers to model and implement brain-inspired algorithms more effectively.

- Energy Efficiency: Neuromorphic computing emphasizes energy efficiency, and Silicon is designed with this principle in mind. It enables the creation of energy-efficient systems capable of processing massive amounts of data in real time.

- AI and Robotics: Neuromorphic computing powered by languages like Silicon has the potential to revolutionize artificial intelligence, robotics, and other fields that require real-time, brain-like processing.

SQL Programming Languages

Structured Query Language, or SQL, is the backbone of database management systems (DBMS) and plays a pivotal role in handling and manipulating data. In this section, we'll explore SQL and its specialized extension, PL/SQL.

SQL is the standard language, formalized by ANSI and ISO, for managing and querying relational databases. Its capabilities include:

- Data Retrieval: SQL lets you retrieve exactly the data you need. A SELECT query can filter rows (WHERE), sort them (ORDER BY), and group them (GROUP BY).

- Data Modification: SQL enables you to add, update, or delete data with the INSERT, UPDATE, and DELETE statements. This is essential for maintaining data accuracy and integrity.

- Data Definition: SQL also provides commands such as CREATE TABLE and ALTER TABLE for defining the structure of databases. These cover creating tables, specifying constraints, and defining relationships between tables through foreign keys.

- Multi-Platform Compatibility: SQL is supported by virtually all major relational database management systems, including MySQL, PostgreSQL, Oracle, and Microsoft SQL Server, although each adds its own dialect extensions.
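The data definition, modification, and retrieval commands described above can be tried without installing a database server. SQLite is available through Python's built-in sqlite3 module. This is a minimal sketch: the employees table and its rows are made-up sample data, and SQLite's dialect differs in places from MySQL, PostgreSQL, Oracle, and SQL Server.

```python
import sqlite3

# An in-memory database: nothing is written to disk.
conn = sqlite3.connect(":memory:")
cur = conn.cursor()

# Data definition: create a table with constraints.
cur.execute(
    """
    CREATE TABLE employees (
        id     INTEGER PRIMARY KEY,
        name   TEXT NOT NULL,
        dept   TEXT NOT NULL,
        salary INTEGER CHECK (salary > 0)
    )
    """
)

# Data modification: insert rows using ? placeholders rather than
# string formatting, which guards against SQL injection.
cur.executemany(
    "INSERT INTO employees (name, dept, salary) VALUES (?, ?, ?)",
    [
        ("Ada", "Engineering", 120),
        ("Grace", "Engineering", 130),
        ("Linus", "Support", 90),
    ],
)
cur.execute("UPDATE employees SET salary = salary + 10 WHERE dept = ?", ("Support",))
cur.execute("DELETE FROM employees WHERE name = ?", ("Ada",))
conn.commit()

# Data retrieval: filter with WHERE and sort with ORDER BY.
rows = cur.execute(
    "SELECT name, salary FROM employees WHERE salary >= ? ORDER BY salary DESC",
    (100,),
).fetchall()
print(rows)  # [('Grace', 130), ('Linus', 100)]
conn.close()
```

The same statements, apart from minor dialect differences, would run against the other systems listed above through their own Python drivers.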

PL/SQL (Procedural Language extensions to SQL) is an extension of SQL introduced by Oracle Corporation. It adds procedural constructs such as variables, conditions, and loops to SQL, allowing developers to build robust database-driven applications. Key features of PL/SQL include:

- Procedural Logic: PL/SQL enables the creation of procedures, functions, triggers, and packages, adding a layer of procedural logic to SQL. This makes it possible to develop complex applications within the database.

- Error Handling: A PL/SQL block can include an EXCEPTION section that catches predefined errors (such as NO_DATA_FOUND) as well as user-defined ones. This makes it easier to identify and manage errors within database procedures and functions.

- Security: Users can be granted permission to execute a stored procedure without being given direct access to the tables it works with, which helps protect the integrity of sensitive data.

- Performance Optimization: Procedures and functions are compiled and stored within the database, and an entire block of statements can be sent to the server in one call. Both reduce parsing overhead and network round trips.

New and Emerging Programming Languages

In the ever-evolving landscape of coding languages, innovation is constant. Two notable new programming languages are Zig and Ballerina, each designed to address specific challenges in software development.

Zig is an open-source programming language that has gained traction for its focus on safety, simplicity, and performance in system-level programming. Here's why Zig is making waves:

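- Safety First: Zig prioritizes predictable behavior, with no hidden control flow or hidden memory allocations. Pointers cannot be null unless they are explicitly declared optional. In its safety-checked build modes (Debug and ReleaseSafe), out-of-bounds accesses and integer overflow are caught at runtime. Zig does not guarantee memory safety the way Rust does, however.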

- Efficiency: Despite its safety features, Zig doesn't compromise on performance. It allows developers to write code that is both secure and highly efficient, making it a promising choice for low-level system programming.

- Self-Contained: Zig ships with its own build system and can also compile C and C++ code. This reduces reliance on external build tools and dependencies, simplifies the development process, and makes cross-compilation straightforward.

- Growing Community: While relatively new, Zig has gained a growing and enthusiastic community of developers who appreciate its innovative approach to systems programming.

Ballerina is a cloud-native programming language designed to simplify the development of microservices and cloud applications. Key features that set Ballerina apart include:

- Integration-First Approach: Ballerina places integration at the forefront, providing built-in support for common integration patterns and protocols. This makes it easier to connect and communicate between services.

- Type-Safe: Ballerina is statically and strongly typed, which helps catch errors at compile-time, improving reliability and maintainability.

- Concurrency Support: Ballerina is built for concurrent and asynchronous programming, crucial for handling the demands of cloud-native applications.

- Rich Standard Library: Ballerina includes a rich standard library for common cloud operations like handling HTTP requests, interacting with databases, and working with REST APIs.

How to Choose the Best Programming Language to Learn

In the vast and diverse world of programming languages, selecting the right one to learn can significantly impact your career and project outcomes. To make an informed choice, consider these key factors:

Purpose and Goals:

- Determine your programming goals. Are you interested in web development, mobile app development, data science, or something else?

- If you're looking for a versatile language, consider a general-purpose programming language like Python, Java, or JavaScript.

Project Requirements:

- Tailor your choice to the specific project or industry you're interested in. For instance, if you want to develop mobile apps, focus on languages like Kotlin or Java (for Android) or Swift (for iOS).

- For data science and analytics, Python and R are top choices due to their rich libraries and support.

Object-Oriented or Scripting:

- Understand the paradigms of object-oriented programming and scripting languages. Object-oriented programming languages like Java and C++ are suitable for building large-scale applications, while a scripting language like Python excels at automation and rapid development.

Market Demand:

- Research the job market to identify which languages are in demand. General-purpose languages like Python and JavaScript often have a wide range of job opportunities.

- Be cautious about proprietary languages, as they may limit your job prospects to specific companies or industries.

Community and Resources:

- Check the size and activity of the language community. A vibrant community means more resources, libraries, and support.

- Popular languages like Python and JavaScript have extensive online resources, making learning easier.

Learning Curve:

- Consider the complexity of the language. Some languages, like Python and Ruby, are known for their readability and ease of learning, making them great choices for beginners.

- Languages like C++ or Rust may have steeper learning curves but offer unparalleled control and performance.

Evolving Trends:

- Stay updated with industry trends. Newer languages like Rust, Go, or Ballerina are worth exploring if they align with your interests and career goals.

- Keep an eye on the adaptability of your chosen language. Technologies evolve, and languages that can adapt easily to new trends tend to remain relevant.

Programming Languages Ranking:

- Consider learning languages that consistently rank high, as they often have robust ecosystems, job opportunities, and long-term viability. However, don't discount languages that are climbing the ranks, as they may represent emerging trends.

Our helpful guide has provided insights into a wide range of programming languages, from versatile general-purpose ones to specialized and emerging options. And should you ever need help, remember that you can simply ask us, ‘do my programming homework for me,’ and we'll make sure it's completed professionally.

Meanwhile, stay adaptable, stay curious, and remember that the world of coding is a realm of endless possibilities waiting to be explored!


Python Scripting Language


Words: 503

Published: Jan 4, 2019


History of Python

Python Features

- Easy to learn - Python has few keywords, a simple structure, and a clearly defined syntax. This allows students to pick up the language quickly.

- Easy to read - Python code is clearly defined and easy to follow visually.

- Easy to maintain - Python's source code is fairly easy to maintain.

- A broad standard library - The bulk of Python's library is very portable and cross-platform compatible on UNIX, Windows, and Macintosh.

- Interactive mode - Python supports an interactive mode that allows interactive testing and debugging of code snippets.

- Portable - Python can run on a wide variety of hardware platforms and has the same interface on all platforms.

- Extendable - You can add low-level modules to the Python interpreter. These modules enable programmers to add to or customize their tools to be more efficient.

- Databases - Python provides interfaces to all major commercial databases.

- GUI programming - Python supports GUI applications that can be created and ported to many libraries and windowing systems, such as Windows MFC, Macintosh, and the X Window System of Unix.
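To illustrate the standard-library and portability points, the sketch below uses only modules that ship with Python (pathlib, tempfile, and json). It should run unchanged on UNIX, Windows, and macOS because pathlib uses the host system's path conventions. The file name settings.json and the helper functions save_settings and load_settings are illustrative choices, not part of any library.

```python
import json
import tempfile
from pathlib import Path


def save_settings(directory: Path, settings: dict) -> Path:
    """Write settings as JSON; Path joins components with the host OS's separator."""
    path = directory / "settings.json"
    path.write_text(json.dumps(settings, indent=2), encoding="utf-8")
    return path


def load_settings(path: Path) -> dict:
    """Read the JSON file back into a dictionary."""
    return json.loads(path.read_text(encoding="utf-8"))


# TemporaryDirectory removes itself when the with-block ends.
with tempfile.TemporaryDirectory() as tmp:
    saved = save_settings(Path(tmp), {"theme": "dark", "autosave": True})
    loaded = load_settings(saved)
    print(saved.name, loaded)  # settings.json {'theme': 'dark', 'autosave': True}
```

No third-party packages or platform-specific code paths are needed, which is the practical meaning of a "portable" standard library.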